How has society allowed customer service to degrade so badly? Honestly, it’s just so bad now – endless loops of “press 1” and ridiculous chatbots that simply don’t work.

It’s terrible everywhere, but in the Philippines, it’s next level. This week, I’ve made six attempts, over a 48-hour period, to have Globe – one of the two biggest telecommunications companies here – answer the simplest of questions:

Which countries are included in the new Roam Surf packages?

Sidebar

While writing this rant, it dawned on me that other people with the same question might stumble onto this post. If that’s you, scroll to the bottom – I’ve put the full list of eligible Roam Surf countries there.

Now, back to the tirade.

Digital Nomad

I’m about to go on a six-week trip that covers Japan, Aruba and the USA. My usual digital nomad-ery of football, flights and freelancing.

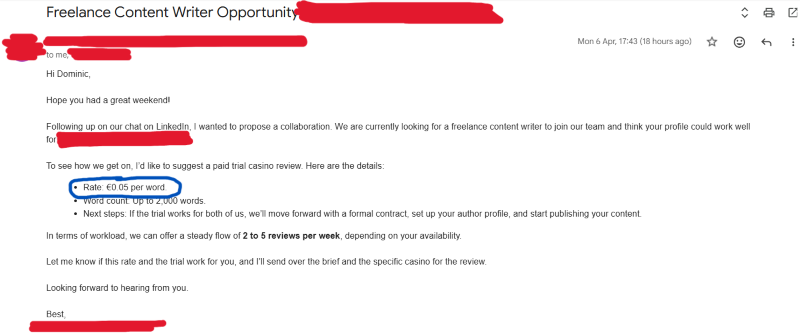

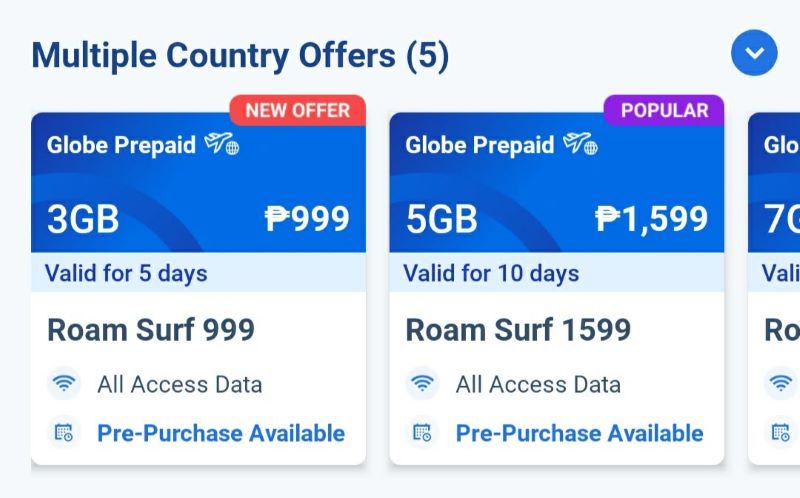

For once, Globe has come up with a really nice product – Roam Surf – which gives you data in “multiple countries”. Normally, you have to buy a roaming package for each individual country, so I welcome this news.

But the thing is, they don’t tell you anywhere on their website – or within either of their apps – which countries are included in the Roam Surf packages. After an extensive search, the best I could find was that it’s “120+ countries”. Does that include Aruba? I’ve no idea.

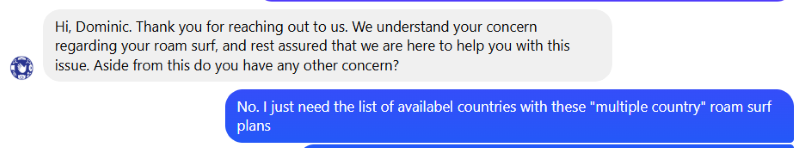

So I tried to contact support.

LOL

This is a simple question that should take seconds to answer. They can either point me to the published list of Roam Surf countries that I’m obviously missing, or they can at least just tell me whether or not Aruba is included.

Wrong. Nothing is ever easy when trying to get customer support in the Philippines.

48 Hours for Globe to Answer a Simple Question

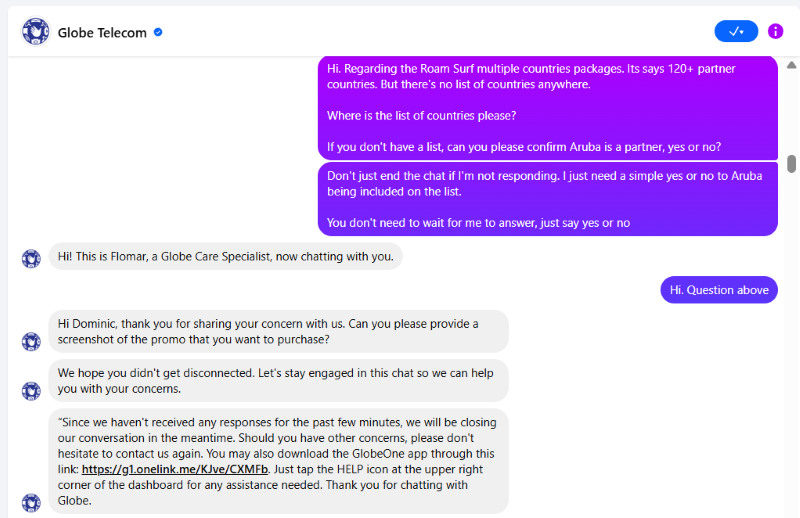

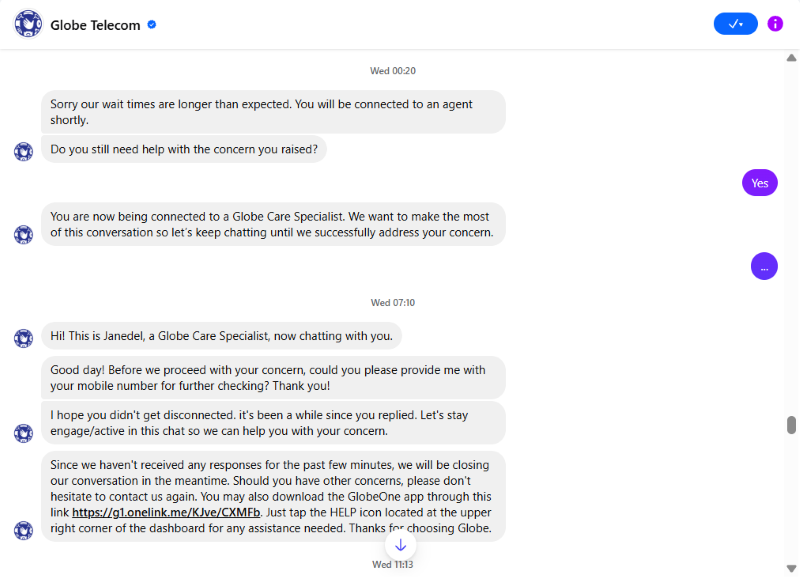

To reiterate, I did eventually get my answer. But it took six tries and 48 hours. And I had to stop everything I was doing while chatting with the agent who eventually helped me.

The entire interaction took 40 minutes, by the way.

If you look away for just a few minutes, they’ll terminate the conversation. They don’t need much of an excuse. You have to give them 100% attention – no working, cooking or doing anything else – or they’ll kick you and you have to start again.

I had Messenger open on a second screen, as well as my phone, to make sure attempt number six was a success.

Intentionally Dishonest

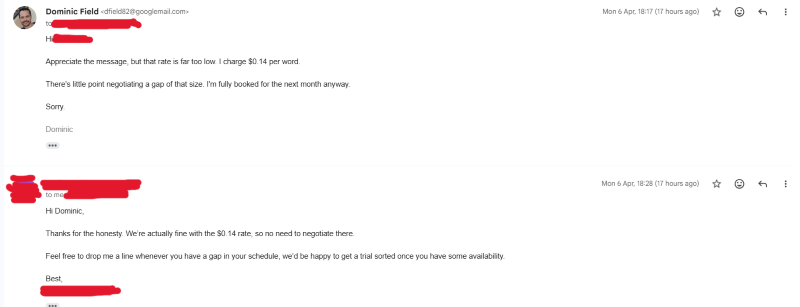

What gets me is the blatant dishonesty of it all. You give them everything they need to answer the question, and they don’t even try.

Instead, the agents pose nonsense questions to you, hoping you’ve temporarily gone offline, or have been distracted by something else. This gives them an excuse to terminate the chat.

And this isn’t a telephone call, by the way. It’s a conversation on Facebook Messenger. Why is the chat even being terminated at all? It could be open for 20 years and it would make no difference. Make it make sense.

Dirty Tricks

Here are some of the reasons Globe refused to answer my question:

- They wanted to know whether I was on a pre-paid or post-paid plan. Does that change the countries on the list? No, but they ended the chat when I didn’t reply quickly enough, in their opinion.

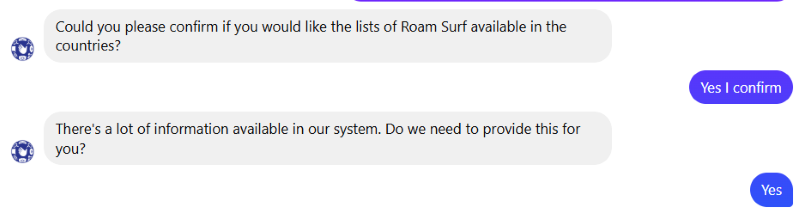

- I was in bed. I reached out around midnight, and they said wait times were longer than expected and asked if I still needed help. I said yes. They eventually connected me at 7.10am, long after I’d given up and gone to bed. That chat ended ten minutes after it began, when I was very obviously offline. Could they not have just answered my question, instead of pretending they needed a response from me first? They could, but that would require a genuine desire to provide support.

- They wanted my phone number first. Does my number affect the list of Roam Surf countries? No, but they terminated the conversation anyway. Even though I’d already provided it to the FUCKING chatbot before I ever reached a human.

More Shithouse Behaviour

By now, I’ve cottoned on to the fact that they’re looking to ask questions at all times. They don’t want to solve anything that takes them off script, regardless of how easy it would be. So it all becomes a game of cat and mouse.

The aim is to get you off the line, however they can. If they resolve the problem, sure, that’s one way. But that takes effort. A better way is to ask you a question – literally anything at all – and hope you get distracted.

If you don’t reply in time, they cut you off.

The most common trick is to repeat the question, then say something like “can you confirm I’ve understood correctly?” – if you don’t reply, you’re gone.

They did this to me four times during the interaction where I eventually got my answer. But I wasn’t letting the slippery fuckers off the hook this time.

Seriously Globe – go and fuck yourselves. All the way off, and then some more.

Corporate Bullshit

So, why can’t they just take the time to answer the question properly? I’ll tell you why. KPIs and corporate cocksucking.

Customer service teams are only ever interested in checking boxes and meeting nonsense targets. By automatically ending the conversation after half a microsecond, they’re keeping the average customer interaction time nice and low.

When the manager has their quarterly review, they can show the CEO how quick they are at dealing with queries. Everyone can pat themselves on the back and jerk each other off about what a wonderful job they’re all doing.

Are the customers actually being assisted? No, but who cares? That was never the point. We’ve met the target for interaction times, so we all get bonuses. FUCK the customer.

Companies Just Don’t Care

This angers me for two reasons. First, I’m fucking sick of getting shit service from every company on Earth. Second, there’s no excuse for not wanting to improve your products and user journeys.

Why don’t they have pride in their work and the service they provide any more?

Before I switched to freelancing, I gained a lot of gambling industry experience, working for some really big organisations, in pretty senior positions. I always tried my best to use and understand the products I put out there, so I could identify problems in the customer journey.

Instead of sitting in my nice air-conditioned office all day, I would go to the coalface and talk to customers and cashiers, asking what challenges they faced and how we could improve the product.

Nobody seems to do this any more. Nobody gives a shit. Did we meet our targets? Cool, bonuses and handjobs all round. Screw the users.

Rotten Culture

But targets are only being met because customers can’t actually get their complaints across. Companies have no idea where the faults are, because consumers aren’t able to report them. We’re not being heard.

Lazy support agents pulling tricks like those at Globe don’t help. But honestly, having worked in Filipino companies for a long time now, I doubt it would matter if they fed this stuff up to middle management anyway.

They’re so protective of their own positions, they’d never risk rocking the boat by actually reporting problems to the big bosses.

List of Globe Roam Surf Countries

If you discovered this post because Globe is a telecommunications company that can’t fucking communicate, here’s the answer to your question.

As of May 14th 2026, the following countries are eligible for Roam Surf:

Albania, Anguilla, Antigua and Barbuda, Argentina, Aruba, Australia, Austria, Bahrain, Bangladesh, Barbados, Belgium, Bermuda, Brazil, British Virgin Islands, Brunei, Bulgaria, Burkina Faso, Cambodia, Canada, Cayman Islands, Chad, Chile, China, Colombia, Costa Rica, Croatia, Czech Republic, Democratic Republic of Congo, Denmark, Dominica, Dominican Republic, Ecuador, Egypt, El Salvador, Estonia, Fiji, Finland, France, French West Indies, Gabon, Germany, Ghana, Greece, Grenada, Guam, Guatemala, Guyana, Haiti, Honduras, Hong Kong, Hungary, Iceland, India, Indonesia, Ireland, Israel, Italy, Jamaica, Japan, Jordan, Kazakhstan, Kenya, Korea, Kuwait, Kyrgyzstan, Laos, Latvia, Liechtenstein, Lithuania, Luxembourg, Macau, Madagascar, Malaysia, Maldives, Mexico, Mongolia, Myanmar, Netherlands, Netherlands Antilles, New Zealand, Nigeria, Norway, Oman, Pakistan, Panama, Papua New Guinea, Paraguay, Peru, Poland, Portugal, Qatar, Romania, Russia, Saipan (Northern Mariana Islands), San Marino, Saudi Arabia, Singapore, South Africa, Spain, Sri Lanka, Taiwan, Thailand, Turkey, UAE, Uganda, United Kingdom, Uruguay, USA, Vanuatu, Vatican City and Vietnam.